How to performance-tune Ruby on Rails applications, or how to troubleshoot slow queries and other performance issues with Ruby on Rails, MongoDB and Mongoid

One of the projects we are currently running for a client is upgrading a Ruby on Rails application from Ruby 2 to Ruby 3. We have encountered several friction points, one of which has been degraded performance across the app.

It is unfortunate that upgrading to a new major version of a language causes greater resource consumption. We prefer our stack to remain lean: if we don't need something, we tend not to allocate resources to it. The motivation for the language upgrade has been to keep in step with the flow of time and to promote security. Functionality-wise, we don't anticipate using any new language features. So from the point of view of performance and resource use (and therefore cost), we actually prefer old versions of the language.

Meanwhile, as part of this process we're increasing the in-house knowledge on how to troubleshoot performance issues. We are documenting our discovery and outcomes, building a shared reference for future diagnostics.

~ * ~ * ~ * ~

There are three areas where we evaluate application performance: the HTTP server (nginx), the application code (Ruby on Rails) and the database (MongoDB, Mongoid).

Database level

On the database level, every query should use an index. MongoDB has a limit of 64 indexes per collection, but we very rarely come close to that many. We therefore encourage creating indexes, including compound indexes, for every query on every page.
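As an illustration, here is a minimal sketch of how a compound index could be declared in a Mongoid model. The model name JobNumber is hypothetical, inferred from the job_numbers collection that appears in the log sample further down; the fields arch_state and number come from that same query, and the untyped field declarations are an assumption.

```ruby
require "mongoid"

# app/models/job_number.rb (hypothetical model name)
class JobNumber
  include Mongoid::Document

  # Field types are left out on purpose; the real model may declare them.
  field :arch_state
  field :number

  # Compound index: the filtered field first, then the field used for sorting.
  index({ arch_state: 1, number: 1 })
end

# Declaring the index does not create it in the database.
# Mongoid ships a rake task for that:
#   rake db:mongoid:create_indexes
```

Whether this exact key order is the best fit depends on the other queries that hit the collection, so treat it as a starting point rather than a recommendation.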

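Mongoid allows database logging to a separate file. To enable it, add this config to the environment config file:

config.mongoid.logger = Logger.new(Rails.root.join('tmp', 'mongo.log'))

config.mongoid.logger.level = Logger::DEBUG

You can also log each command yourself by subscribing to the driver's command monitoring events. The subscriber is an object that responds to started, succeeded and failed, and it should be registered (for example in an initializer) before the Mongo client is created:

class MongoCommandLogger

def started(event); Rails.logger.debug("Mongo Query: #{event.command}"); end

def succeeded(_event); end

def failed(_event); end

end

Mongo::Monitoring::Global.subscribe(Mongo::Monitoring::COMMAND, MongoCommandLogger.new)

The log file (tmp/mongo.log) is then populated with the query documents and their execution durations in seconds. Here is an example of the output: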

D, [2026-03-16T19:22:52.134736 #1235765] DEBUG -- : MONGODB | 127.0.0.1:27017 req:2092 conn:1:1 sconn:284689 | creek_staging.find | STARTED | {"find" => "job_numbers", "filter" => {"arch_state" => {"$in" => [nil, :active]}}, "sort" => {"number" => 1}, "$db" => "creek_staging", "lsid" => {"id" => <BSON::Binary:0x22736 type=uuid data=0xa2928481e2a744e2...>}}

D, [2026-03-16T19:22:52.211293 #1235765] DEBUG -- : MONGODB | 127.0.0.1:27017 req:2092 | creek_staging.find | SUCCEEDED | 0.076s
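Reading such a log by eye gets tedious quickly. The following is a minimal sketch, assuming the log lines keep exactly the shape shown above, that pairs each STARTED line with its SUCCEEDED line by request id (the req: value) and returns the slowest commands first. The method name slowest_commands and the two regular expressions are ours, not part of Mongoid or the driver.

```ruby
# Patterns for the two kinds of log lines shown above (an assumption
# based on that sample; other driver versions may format lines differently).
STARTED_LINE   = /MONGODB \| \S+ req:(\d+).*?\| (\S+) \| STARTED \| (.*)\z/
SUCCEEDED_LINE = /MONGODB \| \S+ req:(\d+).*?\| (\S+) \| SUCCEEDED \| ([\d.]+)s/

# Pair STARTED and SUCCEEDED lines by request id and return the
# slowest commands first.
def slowest_commands(lines, limit: 10)
  started = {}
  finished = []

  lines.each do |raw|
    line = raw.chomp
    if (m = STARTED_LINE.match(line))
      started[m[1]] = m[3]
    elsif (m = SUCCEEDED_LINE.match(line))
      finished << {
        req: m[1],
        namespace: m[2],
        seconds: m[3].to_f,
        command: started.delete(m[1])
      }
    end
  end

  finished.sort_by { |entry| -entry[:seconds] }.first(limit)
end

# Possible usage against the log file configured earlier:
#   slowest_commands(File.foreach("tmp/mongo.log"), limit: 5).each do |entry|
#     puts format("%.3fs %s %s", entry[:seconds], entry[:namespace], entry[:command])
#   end
```

Commands that fail or never finish are simply skipped here, which is acceptable for a quick look at slow queries but would hide errors.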

More interestingly, the database itself provides a profiling capability. To enable it, start mongosh (or mongo if you're running an older version) and run:

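// in mongosh:

use <your-database>

db.setProfilingLevel(1, { slowms: 100 })

db.system.profile.find().sort({ ts: -1 }).limit(5)

The first command enables profiling for operations slower than 100 ms, and the second lists the five most recent profiled operations. The profiling levels are: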

- 0 → profiling off

- 1 → log slow queries only (recommended)

- 2 → log all queries (very heavy, avoid in production)
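Profile entries also tie back to the indexing rule above: each one carries a planSummary field, and a value of COLLSCAN means the whole collection was scanned instead of an index. The sketch below assumes the entries have already been fetched as plain hashes (for example through the Ruby driver) and filters them in Ruby. The method name collection_scans is ours, and the sample entries are made up for illustration; only the field names follow MongoDB's profiler output.

```ruby
# Return profiled operations that scanned a whole collection, slowest first.
# Field names (ns, millis, planSummary, docsExamined, nreturned) follow
# MongoDB's system.profile documents.
def collection_scans(profile_entries)
  profile_entries
    .select { |entry| entry["planSummary"].to_s.start_with?("COLLSCAN") }
    .sort_by { |entry| -entry["millis"].to_i }
    .map { |entry| entry.slice("ns", "millis", "docsExamined", "nreturned") }
end

# Made-up sample entries, for illustration only.
sample = [
  { "op" => "query", "ns" => "creek_staging.job_numbers", "millis" => 140,
    "planSummary" => "COLLSCAN", "docsExamined" => 52_000, "nreturned" => 25 },
  { "op" => "query", "ns" => "creek_staging.job_numbers", "millis" => 3,
    "planSummary" => "IXSCAN { number: 1 }", "docsExamined" => 25, "nreturned" => 25 }
]

collection_scans(sample).each do |entry|
  puts "#{entry['ns']}: #{entry['millis']} ms, " \
       "examined #{entry['docsExamined']} docs for #{entry['nreturned']} results"
end
```

A large gap between docsExamined and nreturned is usually the clearest hint that a query would benefit from an index.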

Application level

The gem 'rack-mini-profiler' has been useful. You can install it in a development (or staging, etc) environment like so:

## Gemfile

group :development, :staging do

gem 'rack-mini-profiler', require: false

## ...

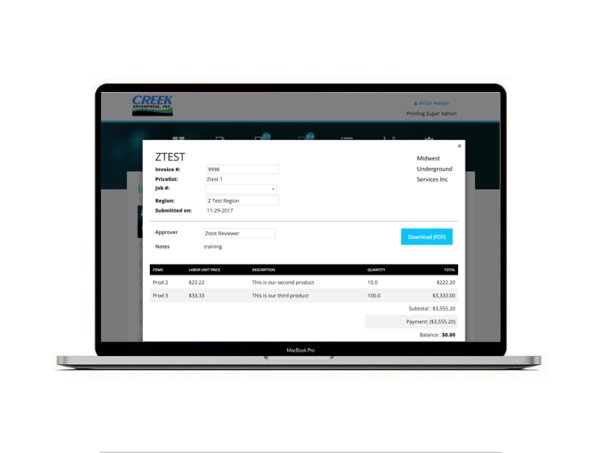

endThe UI of the profiler should then be available in the top-left of each page. The profiler UI lists an unuseful stack trace, but also useful duration of the query in milliseconds, and the full JSON of the query.

In particular, on slow pages some queries may be highlighted in pink. These are suspicious or repeated queries, which often indicate an N+1 problem. We have been able to catch several N+1 issues with this method.
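For readers who have not met the term, an N+1 problem means one query for a list plus one further query per item in it. The sketch below does not touch MongoDB at all: CountingStore is a hypothetical in-memory stand-in that only counts lookups, so that the per-row pattern and the batched pattern can be compared side by side. The job and client data are invented for the example.

```ruby
# Hypothetical in-memory stand-in for a collection. It only counts how
# many "queries" are issued.
class CountingStore
  attr_reader :query_count

  def initialize(records)
    @records = records
    @query_count = 0
  end

  # One lookup per call, comparable to finding a single document by id.
  def find(id)
    @query_count += 1
    @records.fetch(id)
  end

  # One lookup for many ids, comparable to a single {"$in" => ids} filter.
  def find_many(ids)
    @query_count += 1
    @records.values_at(*ids)
  end
end

clients = CountingStore.new({ 1 => "Acme", 2 => "Globex", 3 => "Initech" })
jobs = [
  { number: 10, client_id: 1 },
  { number: 11, client_id: 2 },
  { number: 12, client_id: 3 }
]

# N+1 shape: one client lookup per job row.
names_per_row = jobs.map { |job| clients.find(job[:client_id]) }
per_row_queries = clients.query_count

# Batched shape: collect the ids first, then fetch them in one lookup.
client_ids = jobs.map { |job| job[:client_id] }.uniq
names_batched = clients.find_many(client_ids)
batched_queries = clients.query_count - per_row_queries

puts "per-row lookups: #{per_row_queries}, batched lookups: #{batched_queries}"
```

In a Mongoid application the batched shape is usually reached by eager loading the relation with includes on the criteria, or by issuing a single $in query by hand.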